Automation is no longer optional—it’s essential. But with every system we streamline, new complications arise.

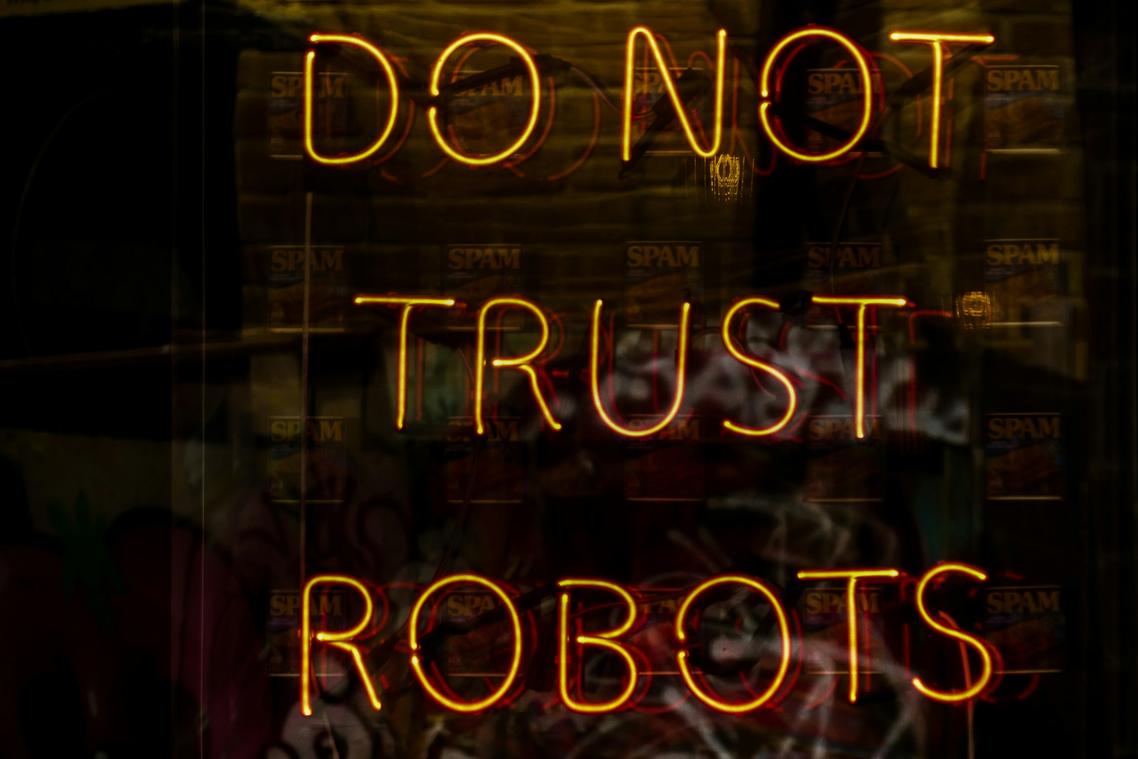

If you’re here, it’s probably because you’re trying to understand how to harness automation without crossing ethical lines. And you’re right to ask. Ignoring the risks doesn’t just hurt workers—it can erode public trust, trigger algorithmic discrimination, and leave your organization exposed to long-term reputational damage.

That’s why this article exists.

I’ve spent years working directly with the kinds of automation systems companies rely on every day. Not just talking about them—building, testing, and fixing them when they go wrong.

In this piece, I’ll guide you through the real-world challenges behind automation ethics—from biased AI decisions to the human consequences of labor displacement.

You’ll leave with a practical understanding of where the red flags are, how to spot them early, and what it takes to implement automation that’s not only efficient, but just.

The Human Cost: Job Displacement and Workforce Transformation


Let’s be honest—when was the last time you heard the word “automation” and didn’t immediately think “layoffs”?

But here’s the deeper question: Is this really just about job loss? Or are we witnessing a much broader transformation—where both manual and cognitive labor are being reshaped at their core?

From self-checkouts at grocery stores to AI-driven legal assistants (yes, those exist), it’s clear that this change isn’t coming—it’s here. Still, some argue that this is simply the price of progress. “Adapt or perish,” they say. But is it fair to expect workers to adapt without any support?

Shouldn’t companies take responsibility for the futures they’re reengineering?

That’s where the ethical mandate for reskilling enters. It’s not just a business strategy—it’s a moral stance. Ignoring the ripple effect of sudden job displacement isn’t just shortsighted—it’s negligent.

So what does a truly proactive approach look like?

- Launch internal mobility programs: Help people grow within the company instead of pushing them out.

- Roll out automation in phases: Sudden change breaks systems—and people.

- Partner with schools and training hubs: Reskill with real-world outcomes in mind.

And let’s not forget: not all automation is the enemy. Some technologies augment human effort; others replace it. That choice carries weight—and falls under automation ethics.

So… which side of the shift will you be on?

Algorithmic Bias: When Code Inherits Human Flaws

Let’s be honest—automation isn’t as neutral as it looks.

Despite sleek branding and promises of objectivity, algorithms often reflect something far uglier: us. More specifically, our historical data, our decisions, and our collective blind spots. This phenomenon is known as algorithmic bias, which happens when AI systems trained on existing data end up replicating—and even amplifying—the same social biases humans have spent decades trying to fix.

Take hiring software, for example. If it’s trained on past resumes (say, from an industry where leadership roles were mostly held by men), it might start downgrading resumes with female-coded names or prioritizing terms associated with male applicants. (Not intentionally malicious, just tragically efficient.) Similarly, some banks have deployed credit models that unfairly score applicants from certain zip codes lower, simply because those neighborhoods historically had lower approval rates.

Now, how does this keep happening? Three key culprits:

- Flawed training data that carries forward inequality.

- Development teams lacking diverse perspectives, leading to blind spots.

- Assumptions built into the code, often unconscious, that reinforce status quo dynamics.

So, what can we do about it?

Here’s what we recommend:

- Conduct regular algorithmic audits to spot hidden biases early.

- Use representative and inclusive data sets, not just the ones that are easiest to collect.

- Embed human-in-the-loop oversight for decisions involving real-world impact. (Pro tip: having real people double-check key outputs isn’t outdated—it’s smart.)
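What does an algorithmic audit actually check? One common starting point is comparing selection rates across groups. The sketch below assumes you have logged each automated decision with a group label. Its 0.8 threshold mirrors the “four-fifths rule” from US employment-selection guidelines, which is a rough screen for adverse impact and not a complete test of fairness. The function names and sample data are hypothetical.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, selected) pairs, where selected is a bool."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def adverse_impact_flags(decisions, threshold=0.8):
    """Return {group: ratio} for groups whose selection rate falls below
    `threshold` times the highest group's selection rate."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    if best == 0:
        return {}  # nobody was selected, so the ratio is undefined
    return {g: r / best for g, r in rates.items() if r / best < threshold}


if __name__ == "__main__":
    # Hypothetical outcomes from a resume-screening model (illustrative numbers only)
    sample = (
        [("group_a", True)] * 50 + [("group_a", False)] * 50
        + [("group_b", True)] * 30 + [("group_b", False)] * 70
    )
    print(selection_rates(sample))       # {'group_a': 0.5, 'group_b': 0.3}
    print(adverse_impact_flags(sample))  # {'group_b': 0.6}
```

A flagged group is a prompt for human investigation, not an automatic verdict: the next step is to look at which inputs drive the gap.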

At the end of the day, automation ethics isn’t just a buzzword—it’s a survival requirement for tech that serves everyone fairly.

The Black Box Problem: Transparency and Accountability

Let’s not sugarcoat it: most automated systems today are black boxes—we know what goes in and what comes out, but the magic in the middle? Not so much. And that becomes a big problem when these systems misfire in high-stakes situations like criminal justice, loan approvals, or medical diagnostics.

First, the obvious question: who’s responsible when AI gets it wrong? Some say it’s the developer (“they wrote the code”); others blame the company deploying it; and some even shrug and point to the algorithm itself (as if it’s a sentient being with a lawyer). But here’s the benefit of tackling this head-on: clear accountability protects everyone. By defining ownership early, organizations avoid legal ambiguity and public backlash, should their systems go off the rails.
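One way to make ownership concrete is to log every automated decision together with the model version that produced it and a named accountable owner. The sketch below is a minimal, hypothetical record format (the `DecisionRecord` fields and the `to_log_line` helper are illustrative, not a standard), assuming decisions are written to an append-only JSON-lines log.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One logged automated decision, tied to a model version and a human owner."""
    decision_id: str
    model_version: str
    accountable_owner: str  # a team or person, never "the algorithm"
    inputs: dict
    outcome: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self):
        # Refuse to record a decision that nobody owns
        if not self.accountable_owner.strip():
            raise ValueError("every automated decision needs an accountable owner")


def to_log_line(record: DecisionRecord) -> str:
    """Serialize a record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    rec = DecisionRecord(
        decision_id="loan-0001",
        model_version="credit-model-v3",
        accountable_owner="risk-analytics-team",
        inputs={"income": 52000, "requested_amount": 10000},
        outcome="referred_to_human_review",
    )
    print(to_log_line(rec))
```

Because a record cannot be created without an owner, “who’s responsible?” becomes something the system enforces up front rather than something argued over after a failure.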

That’s where Explainable AI (XAI) enters the chat. XAI refers to AI systems designed so that humans can understand how and why they make decisions. In regulated sectors, this kind of visibility is increasingly expected, not just helpful. For example, the EU’s GDPR restricts decisions based solely on automated processing that produce legal or similarly significant effects on people (Article 22) and entitles them to “meaningful information about the logic involved” (Articles 13 to 15), provisions often summarized as a “right to explanation.” Pop culture moment? Think of Westworld’s Dolores demanding “free will.” No one wants their life shaped by a system no one understands, not even its maker.

Here’s a quick breakdown of why explainability pays off:

| Benefit | What It Means for You |
|---|---|
| Informed Oversight | Faster identification and correction of system errors |
| User Trust | Increased adoption from customers and stakeholders |
| Regulatory Compliance | Reduced liability and audit-ready operations |
| Future-Proofing | Easier upgrading and retraining of AI systems down the line |

Pro tip: Incorporating transparency doesn’t mean giving away competitive secrets. Instead, focus on making the decision-making process auditable and interpretable—like a readable recipe, not an unsolvable riddle.
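For inherently interpretable models, such as a linear scoring model, “auditable and interpretable” can be as simple as reporting how much each input contributed to the final score. The sketch below assumes a linear model with hypothetical feature names and weights; it is not a real credit model. More complex models would need dedicated post-hoc explanation techniques (for example, SHAP or LIME) instead.

```python
def explain_linear_score(weights, bias, applicant):
    """Return the score and each feature's contribution (weight * value),
    ordered from largest to smallest absolute effect."""
    contributions = {name: weights[name] * applicant[name] for name in weights}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


if __name__ == "__main__":
    # Hypothetical weights for an illustrative scoring model
    weights = {"on_time_payment_rate": 4.0, "debt_to_income": -3.0, "years_employed": 0.5}
    applicant = {"on_time_payment_rate": 0.9, "debt_to_income": 0.4, "years_employed": 2}
    score, reasons = explain_linear_score(weights, bias=1.0, applicant=applicant)
    print(f"score: {score:.2f}")
    for name, value in reasons:
        print(f"{name}: {value:+.2f}")
```

Here the applicant’s payment history is the largest contributor, and the output reads like the “readable recipe” above: a reviewer can see exactly which inputs pushed the score up or down without seeing any proprietary training code.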

In the end, putting policies in place that support transparency isn’t just about covering your legal bases—it’s foundational to user trust and long-term viability. In the realm of automation ethics, accountability isn’t optional; it’s the operating system for tomorrow’s technology.

And if you’re curious how AI decision-making architectures can be structured for success, explore the role of AI agents in automation architecture.

Data Privacy and Surveillance: The Unseen Consequence

Think of automation as a high-performance car—it’s sleek, efficient, and fast. But under the hood? It runs on fuel made of something incredibly personal: your data.

Most advanced automation systems don’t just “know” what to do; they learn by analyzing mountains of user behavior, preferences, and patterns. That’s where privacy concerns come roaring in. This isn’t just a question of legality—it’s about moral stewardship. Sure, regulations like GDPR set important boundaries, but ethical data handling means we go further. It means collecting only what’s necessary (data minimization), ensuring clarity of intent (purpose limitation), and truly asking—not assuming—user consent.
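Data minimization and purpose limitation can be enforced in code rather than left to policy documents. The sketch below assumes a hypothetical `ALLOWED_FIELDS` table that maps each declared purpose to the only fields it may read, and it treats recorded opt-in consent as the gate for every purpose. That is a simplification: GDPR also recognizes lawful bases other than consent (Article 6), so read this as an illustration of the pattern, not a compliance tool.

```python
# Hypothetical mapping of each declared purpose to the only fields it may use
ALLOWED_FIELDS = {
    "order_fulfillment": {"name", "shipping_address", "order_items"},
    "product_recommendations": {"order_items"},
}


def minimize(record, purpose, consented_purposes):
    """Return only the fields needed for `purpose`, and only if the user opted in."""
    if purpose not in ALLOWED_FIELDS:
        raise ValueError(f"undeclared purpose: {purpose!r}")  # purpose limitation
    if purpose not in consented_purposes:
        raise PermissionError(f"no consent recorded for {purpose!r}")
    allowed = ALLOWED_FIELDS[purpose]
    return {k: v for k, v in record.items() if k in allowed}  # data minimization


if __name__ == "__main__":
    user = {
        "name": "A. Customer",
        "shipping_address": "1 Example St",
        "order_items": ["kettle"],
        "browsing_history": ["kettle-reviews-page"],
    }
    consents = {"order_fulfillment", "product_recommendations"}
    print(minimize(user, "product_recommendations", consents))
    # {'order_items': ['kettle']}
```

Note that the browsing history never leaves the record: no declared purpose asks for it, so no downstream system can quietly start using it.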

And in the workplace? Automation can easily shift from helpful to Orwellian. Using software to track clicks, keystrokes, or idle time may sound efficient, but it risks fueling a culture of fear. (Trust doesn’t come with a timestamp.) Balancing productivity and respect is the cornerstone of automation ethics—not just making things work, but making them right.

Building a Future of Responsible Automation

You came here to understand the real issues behind the rise of automation—and now you do.

This article has shown that the biggest threat isn’t automation itself, but how we choose to use it. The real danger lies in ignoring the ethics that should guide implementation. Job losses, unchecked bias, vague accountability, and privacy violations aren’t technological inevitabilities; they’re human choices.

But there’s a better way forward.

A proactive, people-first approach to automation can unlock its full potential—without leaving fairness, transparency, or your workforce behind. When ethics lead, long-term efficiency follows.

Here’s what you should do next: Start a conversation in your organization today. Build a formal ethical framework to guide every automation decision from here on out.

This isn’t just best practice—it’s how leading companies stay trusted, future-ready, and profitable. The industry is shifting. Don’t wait until it’s too late to act.