Your team is drowning in tools.

You click between five dashboards just to find one log. Incidents take hours to resolve, not minutes. You’re always reacting, never ahead.

I’ve seen this exact pattern across healthcare, finance, and logistics teams. Same symptoms. Same exhaustion.

It’s not about adding another tool. It’s about stopping the chaos.

This article explains what Togtechify actually does, and why it’s different from every other platform promising “unified operations.”

I’ve built and scaled technical infrastructure for companies that can’t afford downtime. Not as a consultant flying in for a week. As someone who stayed long enough to fix the root causes.

You want to know: Is this real? Does it fit my stack? Will it change how my team works tomorrow?

Yes. Yes. And yes, if you care about outcomes over optics.

No buzzwords. No vague promises. Just how it works, where it fits, and where it doesn’t.

You’ll walk away knowing whether Togtechify solves your problem. Or just adds noise.

That’s the only thing worth reading.

Togtech Solutions: Not Another Dashboard

Togtech Solutions is a unified platform. It ties infrastructure monitoring, automated remediation, and cross-team collaboration into one workflow. Not a dashboard you stare at, and not a ticketing tool that just moves work around.

I’ve watched teams waste hours chasing alerts across five tools. Togtech fixes that.

It’s not ITSM. It’s not observability. Those tools wait for you to interpret data.

Togtech uses context-aware triggers. An alert from a payment service doesn’t just ping your NOC. It checks latency, recent deploys, and error rates before it decides who gets notified, and how urgently.
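Here’s a rough sketch of that kind of decision in Python. Everything in it is an assumption for illustration: the `AlertContext` fields, the 2% error-rate limit, the 30-minute deploy window, and the team names. It isn’t Togtech’s actual rules engine, just the shape of a trigger that reads context before it pages anyone.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class AlertContext:
    service: str
    p95_latency_ms: float
    latency_slo_ms: float
    error_rate: float                   # fraction of failed requests, 0.0 to 1.0
    last_deploy_at: Optional[datetime]  # None if no deploy on record


@dataclass
class Route:
    notify: str   # who gets paged (hypothetical team names)
    urgency: str  # "high" or "low"


# Hypothetical thresholds, chosen only for illustration.
ERROR_RATE_LIMIT = 0.02
RECENT_DEPLOY_WINDOW = timedelta(minutes=30)


def route_alert(ctx: AlertContext, now: datetime) -> Route:
    """Decide who hears about an alert, and how loudly, from its context."""
    slow = ctx.p95_latency_ms > ctx.latency_slo_ms
    failing = ctx.error_rate > ERROR_RATE_LIMIT
    recent_deploy = (
        ctx.last_deploy_at is not None
        and now - ctx.last_deploy_at <= RECENT_DEPLOY_WINDOW
    )
    if failing and recent_deploy:
        # Errors right after a deploy: the owning team can roll back fastest.
        return Route(notify=f"{ctx.service}-owners", urgency="high")
    if failing:
        return Route(notify="sre-oncall", urgency="high")
    if slow:
        # Slow but not failing: worth a look, not a 3 a.m. page.
        return Route(notify="sre-oncall", urgency="low")
    # Everything within limits: queue it for the NOC, page no one.
    return Route(notify="noc-queue", urgency="low")


now = datetime(2024, 5, 7, 14, 0, tzinfo=timezone.utc)
ctx = AlertContext("payments", 250.0, 300.0, 0.05, now - timedelta(minutes=10))
print(route_alert(ctx, now))  # Route(notify='payments-owners', urgency='high')
```

The point of the design: the same raw alert lands on different desks depending on what else is true at that moment.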

The low-code workflow builder means SREs don’t have to beg engineers to write scripts, and DevOps doesn’t have to translate runbooks into Jira tickets.

Shared runbooks. Real-time status sync. Role-specific views.

That’s how it bridges DevOps, SRE, and NOC: not with meetings, but with shared context.

One midsize fintech cut MTTA (mean time to acknowledge) by 62%. How? Auto-prioritized alerts sent the right person the right info, with no manual triage.
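MTTA (mean time to acknowledge) is simple arithmetic: average the gap between when an alert fires and when a human acknowledges it, then compare two periods. A minimal Python sketch with made-up timestamps (not the fintech’s data):

```python
from datetime import datetime, timedelta


def mtta(incidents):
    """Mean time to acknowledge: average of (acknowledged_at - triggered_at)."""
    gaps = [acked - triggered for triggered, acked in incidents]
    return sum(gaps, timedelta()) / len(gaps)


def pct_reduction(before: timedelta, after: timedelta) -> float:
    """How much smaller `after` is than `before`, as a percentage."""
    return 100 * (before - after) / before


t0 = datetime(2024, 3, 1, 9, 0)
before = [(t0, t0 + timedelta(minutes=20)), (t0, t0 + timedelta(minutes=30))]
after = [(t0, t0 + timedelta(minutes=10)), (t0, t0 + timedelta(minutes=10))]

print(mtta(before), mtta(after))                 # 0:25:00 0:10:00
print(pct_reduction(mtta(before), mtta(after)))  # 60.0
```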

You’re probably thinking: Does this actually replace what we already use? Yes, if what you use doesn’t act on context.

Togtechify is where that starts.

No more guessing which tool owns the problem. The platform owns the resolution.

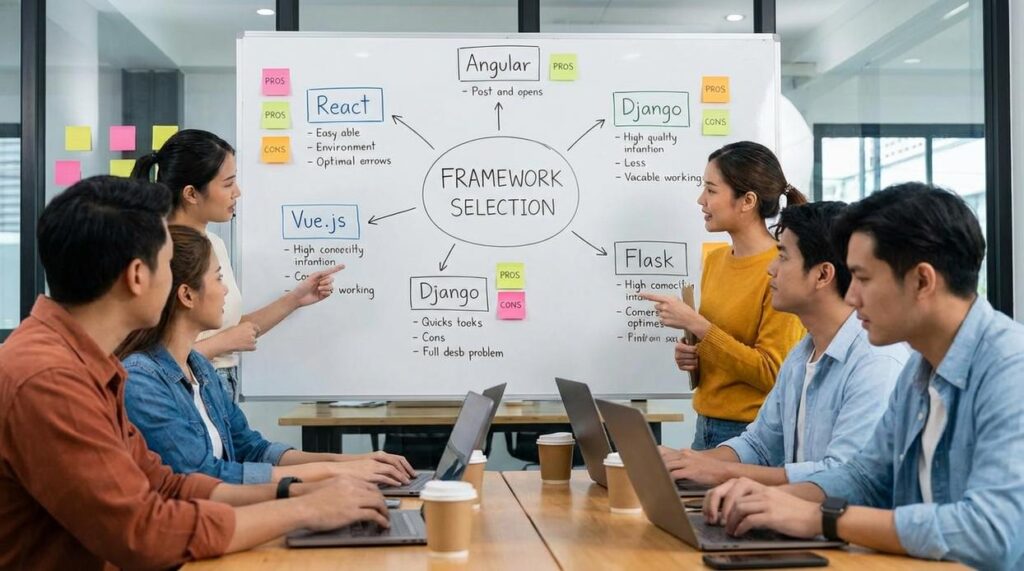

What Actually Works: Togtech’s Three Real Tools

I’ve watched teams drown in alerts. Then they try Togtech.

Adaptive Alert Intelligence is not another threshold slider. It watches how your team actually responds, not what some engineer guessed last year. It knows when your SREs are already overloaded.

It knows which services keep the CFO awake. It drops noise before it hits Slack.

You ask: does it learn? Yes. But not slowly.

It uses your real history, not synthetic test data.
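One way to picture “learning from your real history” is per-alert action rates: how often did an alert like this actually lead someone to do something? The sketch below is my assumption about the idea, not Togtech’s model. The 10% threshold, the 20-sample minimum, and the “busy on-call doubles the bar” rule are all hypothetical.

```python
from collections import defaultdict


def action_rates(history):
    """history: (alert_signature, was_actioned) pairs from past incidents.

    Returns (rates, counts) dicts keyed by signature, where rate is the
    share of past firings that led someone to take action.
    """
    seen = defaultdict(int)
    acted = defaultdict(int)
    for signature, was_actioned in history:
        seen[signature] += 1
        acted[signature] += int(was_actioned)
    rates = {sig: acted[sig] / seen[sig] for sig in seen}
    return rates, dict(seen)


def should_forward(signature, rates, counts, oncall_open_incidents,
                   base_threshold=0.10, overload_at=3, min_samples=20):
    """Forward an alert to chat only if history says it tends to matter."""
    if counts.get(signature, 0) < min_samples:
        # Too little history to judge: forward rather than guess.
        return True
    threshold = base_threshold
    if oncall_open_incidents >= overload_at:
        # On-call is already stretched: demand stronger evidence.
        threshold *= 2
    return rates[signature] >= threshold
```

Unknown alerts always get through; only alerts with a long record of being ignored get dropped, and the bar rises when the team is already busy.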

One-Click Remediation Orchestration runs scripts that already passed your tests. Not theory. Not “maybe.” It works across AWS, your old Windows server, and Salesforce, with the same button.

Every action logs who ran it, when, and what changed.

(Pro tip: audit-ready logs mean you skip the 45-minute post-mortem explanation.)
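The “same button, full audit trail” idea fits in a few lines of Python. This sketch assumes remediations are pre-approved commands kept in an allowlist (the `approved` mapping is hypothetical) and that the audit log is a local JSON Lines file. It shows the pattern, not Togtech’s implementation.

```python
import json
import subprocess
from datetime import datetime, timezone


def run_remediation(name, approved, actor, audit_path):
    """Run a pre-approved remediation and append an audit record.

    approved: mapping of remediation name -> argv list that has already
    passed your tests. Anything not in it is refused.
    """
    if name not in approved:
        raise PermissionError(f"{name!r} is not an approved remediation")
    command = approved[name]
    started = datetime.now(timezone.utc)
    result = subprocess.run(command, capture_output=True, text=True)
    record = {
        "action": name,
        "actor": actor,                     # who ran it
        "started_at": started.isoformat(),  # when
        "command": command,
        "exit_code": result.returncode,
        "output": result.stdout.strip(),    # what the script reported changing
    }
    # JSON Lines, opened in append mode: one record per action.
    with open(audit_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(record) + "\n")
    return record
```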

The Operational Knowledge Graph connects things no one tagged. An outage hits. It pulls up the config change from Tuesday, the ticket where this exact error was fixed in March, and the downstream service nobody remembered depended on that API.

No tagging required. No weekly “knowledge sync” meetings.
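Under the hood, “the downstream service nobody remembered” is a graph walk. The Python sketch below assumes a hypothetical dependency map plus lists of changes and past tickets. It walks dependency edges in reverse to find everything that relies on the failing service, then pulls matching changes and past fixes. It illustrates the idea only; how Togtech builds its graph without tagging isn’t covered here.

```python
from collections import deque


def impacted_by(failing, depends_on):
    """Every service that depends on `failing`, directly or transitively."""
    # Reverse the edges: dependency -> services that call it.
    dependents = {}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(service)
    seen, queue = set(), deque([failing])
    while queue:  # breadth-first search over the reversed edges
        node = queue.popleft()
        for caller in dependents.get(node, []):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    return seen


def outage_context(failing, error, depends_on, changes, tickets):
    """Gather impact, recent changes, and past fixes for one outage."""
    return {
        "impacted": sorted(impacted_by(failing, depends_on)),
        "recent_changes": [c for c in changes if c["service"] == failing],
        "past_fixes": [t for t in tickets if t["error"] == error],
    }


# Hypothetical data: edges point from a service to what it depends on.
DEPENDS_ON = {
    "checkout": ["payments-api"],
    "invoicing": ["payments-api"],
    "monthly-report": ["invoicing"],
    "payments-api": ["postgres"],
}
CHANGES = [{"service": "payments-api", "what": "pool size 50 -> 10", "day": "Tue"}]
TICKETS = [{"id": "OPS-101", "error": "PoolTimeout", "month": "Mar"}]

print(outage_context("payments-api", "PoolTimeout", DEPENDS_ON, CHANGES, TICKETS))
```

Note that `monthly-report` never touches `payments-api` directly. It shows up only because the walk is transitive, which is exactly the dependency people forget.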

All three work out of the box. No extra license. No consultant holding your hand.

You’re not buying modules. You’re turning on features.

Togtechify isn’t magic. It’s just what happens when tools stop pretending to be smart and start acting like they’ve been watching you for six months.

Most platforms make you choose between speed and control. Togtech doesn’t ask you to pick.

Who Should Jump In and Who Should Pause

I’ve watched teams force Togtechify into places it doesn’t belong. It backfires every time.

Engineering teams managing hybrid infrastructure? Yes. Companies with 50+ engineers scaling fast? Yes. Orgs juggling three or more mission-critical SaaS tools? Also yes.

But if your CI/CD pipeline is still a Slack message and a manual rollout? Wait.

If you don’t have centralized logging, or worse, you’re still grepping through local logs on five different servers? Not yet.

If no one owns incident response, or it’s just “whoever’s online at 3 a.m.”? Don’t touch it.

Trying it without defined success metrics is like tuning a guitar while wearing noise-canceling headphones. You’ll think it sounds fine until the first outage.

Measure reduction in repeat incidents. Not alert volume. Not dashboard uptime.

Repeat incidents.
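Measuring repeat incidents only needs a consistent root-cause label per incident. A minimal Python sketch, assuming each incident carries a root-cause signature string (the labels below are hypothetical): an incident counts as a repeat if the same signature already appeared earlier in the window.

```python
from collections import Counter


def repeat_rate(root_causes):
    """Share of incidents whose root cause had already occurred in the window."""
    if not root_causes:
        return 0.0
    counts = Counter(root_causes)
    # The first occurrence of each cause is new; every later one is a repeat.
    repeats = sum(n - 1 for n in counts.values())
    return repeats / len(root_causes)


last_quarter = ["dns-ttl", "dns-ttl", "disk-full", "dns-ttl", "cert-expiry"]
this_quarter = ["disk-full", "cert-expiry", "dns-ttl"]
print(repeat_rate(last_quarter), repeat_rate(this_quarter))  # 0.4 0.0
```

If the rate falls while total incident count stays flat, you’re fixing root causes, not just silencing alerts.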

Skipping workflow mapping before rollout? That’s how you get ops teams ignoring alerts because they don’t match reality.

Underestimating change management? That’s how you get 87% adoption on paper and zero real usage.

Togtechify World Tech News From Thinksofgamers covers real cases like this. Not theory. Actual war stories.

You need ownership. You need clarity. You need to know what “done” looks like.

Otherwise, you’re just adding noise.

And noise doesn’t scale.

Real Implementation: What Onboarding Actually Feels Like

I’ve watched ten teams go through this. Not the sales demo version. The real one.

Week 1 is quiet. Just data flowing in. You see your systems talk to Togtechify: logs, metrics, traces. You also get a baseline health score. No fanfare. Just numbers appearing where they weren’t before.

Week 2 gets loud. Alert rules start firing. We tune them with you, not for you.

The first two auto-remediations run live. One fixes a stale AWS Lambda timeout. Another clears a stuck GitHub Actions queue. (Yes, both happened last Tuesday.)

Engineering leads spend under two hours total that week. SREs? About an hour a day.

That’s it.

Week 3 is hands-on. Team training. Custom dashboards built while you watch.

We co-write the runbooks. No templated fluff.

Week 4 is handoff. KPI review. You walk away knowing what “healthy” looks like for your stack.

Out-of-the-box integrations: Datadog, PagerDuty, AWS CloudWatch, ServiceNow, GitHub Actions. None need custom code. Just auth tokens and permissions.
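If the integrations really need only auth tokens and permissions, a preflight check before onboarding is worth five minutes. The Python sketch below is hypothetical: the environment variable names are placeholders, and the exact credential each vendor requires (API key, app key, IAM role, OAuth token) should come from that vendor’s own documentation.

```python
import os
from typing import Dict, List, Mapping, Optional

# Placeholder variable names per integration; adjust to your vendors' docs.
REQUIRED_CREDENTIALS: Dict[str, List[str]] = {
    "Datadog": ["DATADOG_API_KEY", "DATADOG_APP_KEY"],
    "PagerDuty": ["PAGERDUTY_API_TOKEN"],
    "AWS CloudWatch": ["AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY"],
    "ServiceNow": ["SERVICENOW_INSTANCE", "SERVICENOW_TOKEN"],
    "GitHub Actions": ["GITHUB_TOKEN"],
}


def missing_credentials(env: Optional[Mapping[str, str]] = None) -> Dict[str, List[str]]:
    """Map each integration to the credential variables that are unset or empty."""
    env = os.environ if env is None else env
    report = {}
    for integration, names in REQUIRED_CREDENTIALS.items():
        missing = [name for name in names if not env.get(name)]
        if missing:
            report[integration] = missing
    return report


if __name__ == "__main__":
    for integration, names in missing_credentials().items():
        print(f"{integration}: missing {', '.join(names)}")
```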

Post-launch? Thirty days of escalation support. Real humans, not chatbots.

You’ll know it worked when your on-call pager stops buzzing at 3 a.m. for things that fix themselves.

Metrics That Don’t Lie

I track four things. Not ten. Not twenty.

Four.

Percentage reduction in manual triage time. This isn’t about speed. It’s about your team not staring at logs at 2 a.m.

Less triage means faster releases. Period.

Increase in auto-resolved incidents? That’s burnout insurance. Fewer tickets piling up. Fewer people quitting.

Decrease in cross-team handoffs per incident? Every handoff is a delay. And a miscommunication waiting to happen.

MTTR for tier-2+ issues dropping? That’s real money. Downtime costs scale fast.

And this one cuts straight to the bill.
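All four can come from the incident records you already keep. A minimal Python sketch, assuming each incident records triage minutes, whether automation closed it, the number of cross-team handoffs, a tier (1 = lowest), and minutes to resolve. The field names are hypothetical, and “tier” may map to severity or support level in your tooling.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional


@dataclass
class Incident:
    triage_minutes: float   # human time spent on manual triage
    auto_resolved: bool     # closed by automation, no human action
    handoffs: int           # times ownership moved between teams
    tier: int               # 1 = lowest tier
    resolve_minutes: float  # detection to resolution


def scorecard(incidents: List[Incident]) -> dict:
    """The four metrics for one period."""
    tier2_plus = [i.resolve_minutes for i in incidents if i.tier >= 2]
    return {
        "triage_minutes": sum(i.triage_minutes for i in incidents),
        "auto_resolved_share": sum(i.auto_resolved for i in incidents) / len(incidents),
        "handoffs_per_incident": mean(i.handoffs for i in incidents),
        "mttr_tier2_plus": mean(tier2_plus) if tier2_plus else None,
    }


def compare(before: List[Incident], after: List[Incident]) -> dict:
    """Period-over-period change; negative handoff and MTTR deltas are good."""
    b, a = scorecard(before), scorecard(after)
    mttr_delta: Optional[float] = None
    if b["mttr_tier2_plus"] is not None and a["mttr_tier2_plus"] is not None:
        mttr_delta = a["mttr_tier2_plus"] - b["mttr_tier2_plus"]
    return {
        "triage_reduction_pct": 100 * (b["triage_minutes"] - a["triage_minutes"]) / b["triage_minutes"],
        "auto_resolved_share_delta": a["auto_resolved_share"] - b["auto_resolved_share"],
        "handoffs_delta": a["handoffs_per_incident"] - b["handoffs_per_incident"],
        "mttr_tier2_plus_delta": mttr_delta,
    }
```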

Don’t waste time on “uptime %” or “number of alerts.” They’re noise without context. (Like counting raindrops during a flood.)

If your team spends more than 15 hours a week manually correlating alerts, Togtechify should cut that by ≥70% within 60 days.

That’s the baseline. Not aspirational. Actual.
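The baseline is easy to check yourself. At 15 hours a week, a 70% cut means no more than 4.5 hours of manual correlation after 60 days. A small Python helper for that check (the function names are mine, for illustration):

```python
def max_hours_after(hours_before: float, target_pct: float = 70.0) -> float:
    """Weekly correlation hours still allowed once the target cut is met."""
    return hours_before * (100 - target_pct) / 100


def meets_target(hours_before: float, hours_after: float, target_pct: float = 70.0) -> bool:
    """True if weekly manual-correlation hours fell by at least target_pct."""
    if hours_before <= 0:
        raise ValueError("baseline hours must be positive")
    reduction_pct = 100 * (hours_before - hours_after) / hours_before
    return reduction_pct >= target_pct


print(max_hours_after(15))                            # 4.5
print(meets_target(15, 4.0), meets_target(15, 6.0))   # True False
```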

Clarity Starts Where Chaos Ends

I’ve seen what wasted engineering hours do to teams. They burn out. They miss deadlines.

They blame each other.

Inconsistent incident handling? That’s not process. It’s guesswork with consequences.

Technical debt hiding behind ten tools? That’s not scale. It’s surrender.

Togtechify doesn’t just show you the mess. It gives you shared context, real automation, and a path that actually fits your team, not some consultant’s fantasy.

You’re tired of pretending everything’s under control.

So am I.

Download the free Operational Readiness Checklist. It takes two minutes. It tells you, honestly, whether your team is ready or whether you’re about to waste another quarter.

Most teams aren’t ready. Yours can be.

Clarity isn’t optional anymore. It’s your next competitive advantage.